Blah blah Microservices blah blah

As a closing keynote on the second day of Jfokus, Jonas Bonér took the stage under the very clarifying title “Blah blah Microservices blah blah”, which turned out to mean “From microliths to microsystems”.

As a first observation, he stated that no one really likes microservices. They are a necessary evil, because “doing” microservices comes at a cost. In fact, microservices are just a specialisation of an older concept: distributed systems. But what we often build are microliths: applications that might be called microservices except for the fact that they live alone. No failover, no resilience, nothing. Just like actors, microservices should come in systems, and those systems should be designed as distributed systems, with each microservice focusing on a single responsibility.

Just like reality

That means, as a consequence, embracing the harsh reality of failure, delays, unavailability and the like. It’s just like reality! As Pat Helland once said: a message is never about “now”, but about the past, since it needed time to get from the sender to the receiver. At best, this information travels at the speed of light, but often it is slower. Still, we hold on to the thought that a message we received describes the present, when it always describes the past. And although we would like our systems to scale according to Amdahl’s Law, they often behave more like Gunther’s Law (the Universal Scalability Law). This law states that adding more resources to a system might actually slow it down, due to the cost of contention and of keeping shared state coherent. To quote Pat Helland again: in a distributed system you can either know where the work is done or when the work is done - but you can’t know both.
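To make the difference between the two laws concrete, here is a minimal Python sketch. The coefficients (a 5% contention penalty and a 0.1% coherency penalty) are made-up values for illustration, not figures from the talk.

```python
def amdahl_speedup(workers: int, serial_fraction: float) -> float:
    """Amdahl's Law: speedup when a fixed fraction of the work stays serial."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / workers)


def usl_speedup(workers: int, contention: float, coherency: float) -> float:
    """Gunther's Universal Scalability Law.

    The extra coherency term grows with workers * (workers - 1), which is
    why adding workers can eventually reduce throughput.
    """
    return workers / (
        1.0 + contention * (workers - 1) + coherency * workers * (workers - 1)
    )


# Assumed, illustrative coefficients.
CONTENTION = 0.05
COHERENCY = 0.001

for workers in (1, 8, 32, 128):
    print(
        workers,
        round(amdahl_speedup(workers, CONTENTION), 2),
        round(usl_speedup(workers, CONTENTION, COHERENCY), 2),
    )
```

With these assumed coefficients the Amdahl curve keeps rising, ever more slowly, while the USL curve peaks at roughly 30 workers and then declines. With a coherency penalty of zero, the two formulas give the same result.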

Strive to minimize coupling and communication

So that means we need to actually believe in the eventual consistency paradigm, instead of chatting with other systems to check whether they are consistent. It’s not only about non-blocking I/O, it’s also (or maybe even more) about non-blocking requests. It should be OK for communicating systems to simply rely on the other side doing its work at some point in time. In addition, you should always apply back-pressure to prevent fast systems from overloading slower ones. There are two tools that may be of help here:

- Reactive design

- Events-first DDD

Reactive design

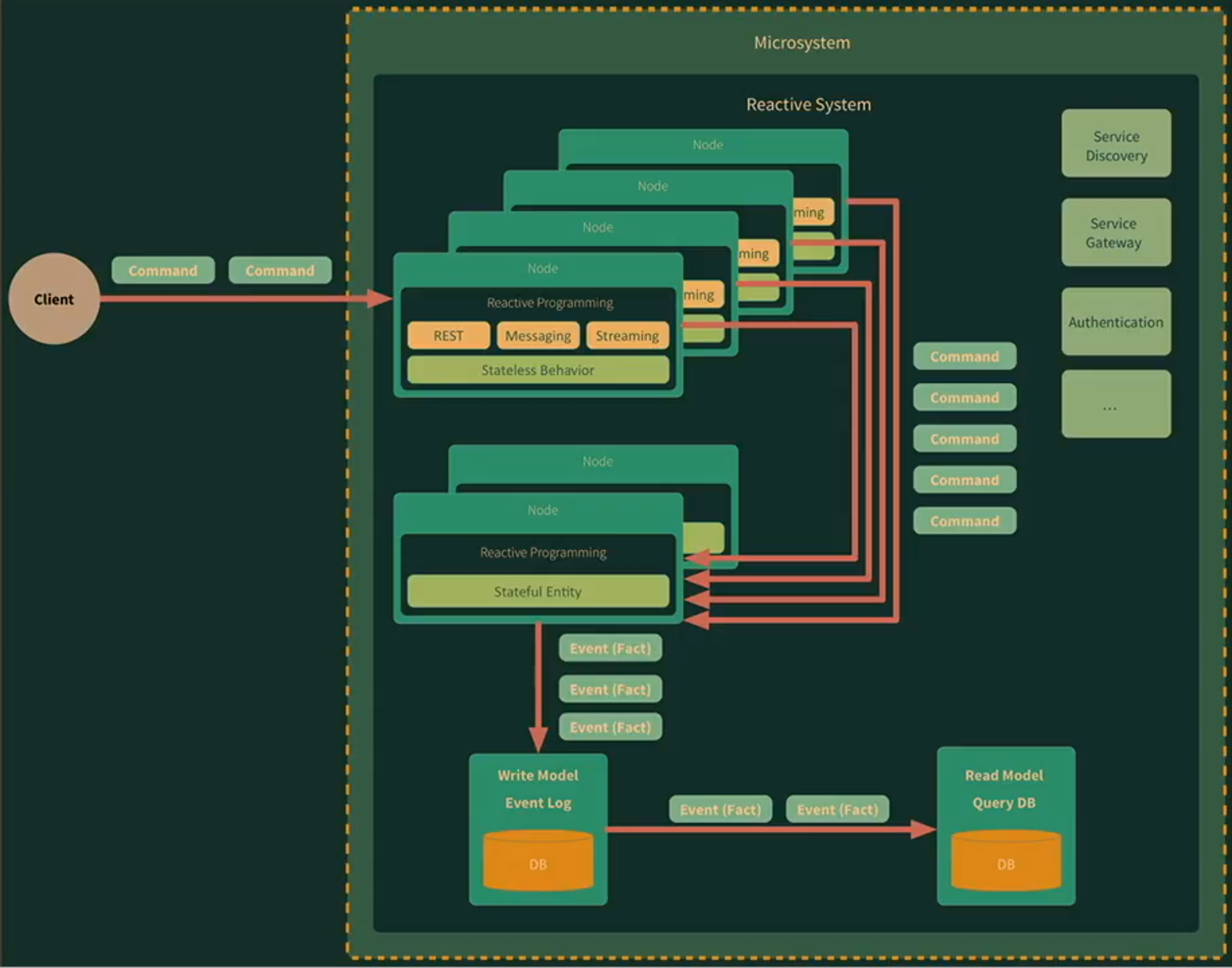

Of course, reactive is quite a buzzword nowadays. We must not confuse reactive programming with reactive systems. Reactive programming is about making individual instances of services performant and efficient. Reactive systems, on the other hand, are about distributed systems: compositions of services that are elastic and resilient. In general, both are about embracing asynchrony - non-blocking I/O, non-blocking communication - letting it go! The good news is that this helps minimize contention on shared resources, leading to more efficient use of these resources.

Another important design principle when it comes to reactive systems is back pressure. It is basically a way to prevent faster systems from flooding slower ones, by allowing the slower ones to signal that they already have enough work to do. Instead of faster systems pushing work (or messages) to slower ones, it lets the slower ones pull work whenever they are capable of executing it. But again, this only works if all services in a distributed system adhere to it. Currently, a lot of frameworks implement these principles, like Akka Streams, RxJava and Vert.x, and Java 9 will bring the Flow API, which follows the same ideas.
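The pull model can be sketched in a few lines of Python. This is a simplified, synchronous illustration loosely inspired by the `request(n)` idea in Reactive Streams, not the API of any of the frameworks mentioned; the `Subscription` class and its `exhausted` flag are hypothetical names chosen for this example.

```python
from typing import Callable, Iterator, List


class Subscription:
    """Hands out items only when the consumer explicitly asks for them."""

    def __init__(self, source: Iterator[int], on_next: Callable[[int], None]) -> None:
        self._source = source
        self._on_next = on_next
        self.exhausted = False

    def request(self, n: int) -> int:
        """Deliver at most n items and return how many were delivered."""
        delivered = 0
        for _ in range(n):
            try:
                item = next(self._source)
            except StopIteration:
                self.exhausted = True
                break
            self._on_next(item)
            delivered += 1
        return delivered


received: List[int] = []
batch_sizes: List[int] = []
subscription = Subscription(iter(range(7)), received.append)

# The slow consumer decides the pace: it never gets more than 3 items at once.
while not subscription.exhausted:
    batch_sizes.append(subscription.request(3))
```

The producer never runs ahead of the consumer, because nothing is delivered until `request` is called. A real implementation would be asynchronous and would also have to handle errors and cancellation.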

However, the de facto standard for inter-service communication seems to be HTTP, which is typically used as a blocking, synchronous request/response exchange. In the light of the foregoing discussion, that suddenly feels like a bad default. Point-to-point communication, in this scenario, can often become a mess with lines being drawn all over the place. Decoupling this communication by employing publish/subscribe patterns can help to achieve higher elasticity and resilience.
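As a rough illustration of that decoupling, here is a minimal in-process publish/subscribe sketch in Python. The `EventBus` class and the `order-placed` topic are hypothetical; a real system would put a message broker between the services and deliver asynchronously.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[dict], None]


class EventBus:
    """Routes events by topic; the publisher never knows who is listening."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber and return how many got it."""
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(event)
        return len(handlers)


bus = EventBus()
billing_inbox: List[dict] = []
shipping_inbox: List[dict] = []

# Two independent services register interest in the same topic.
bus.subscribe("order-placed", billing_inbox.append)
bus.subscribe("order-placed", shipping_inbox.append)

delivered = bus.publish("order-placed", {"order_id": 1})
unheard = bus.publish("order-cancelled", {"order_id": 1})
```

Adding a third consumer only takes another `subscribe` call; the publishing side does not change.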

But again, as long as the services are single-instance services, they are not resilient or scalable at all. To achieve both of these characteristics, we must separate stateless behavior from stateful entities, so they can be scaled individually. As long as the behavior is truly stateless, it allows for easy scaling. That’s what serverless software, or functions as a service (in the style of AWS Lambda), is all about; it pushes the state out of the behavior so the behavior can be scaled almost infinitely. Still, a truly stateless architecture is something that we cannot achieve - we’re just making the state someone else’s problem. As a consequence, it is harder to control things like integrity, scalability and availability of the persistent state.

Many of the abstractions that we have used in computer science until now, like EJBs or RPC, are leaky abstractions. Eventually, they will make you run into a wall, because they abstract away too much. By embracing reality instead of hiding it, we are forced to properly handle things that can and will happen when running software, like partial failures or other difficult situations. Message-driven systems are a better abstraction of that reality and allow you to model the software in such a way that it can deal with that reality.

Another way to look at it is the distinction that Pat Helland makes between inside data (state), outside data (history), and commands, which express what needs to be done with either form of data.

Events-first DDD

Domain-driven design is a technique that is often used when designing reactive systems. In its events-first variant, it doesn’t focus on the things (nouns), but on events, and lets them define the bounded context. Those events represent facts: something that happened in the past and is now immutable. Apart from new facts, things that didn’t happen before, there are also deduced facts, which are derived from other facts. To discover these facts, a common technique is event storming, where you brainstorm about things that happen inside some context. Once you understand the causality between facts, you know how events flow through your system(s).

State, on the other hand, is mutable, as long as it is contained within certain boundaries, i.e. a single instance of a service. The rest of the world just doesn’t know about this state, because it cannot be shared. Processing facts may lead to other facts, which can be published to the outside world. That leads to the statement that “CRUD is dead”: since facts are only ever added and read, never updated or deleted, we just need the C and the R, not the U or the D.

An event log is just an ordered log of every event that happened in a system. It perfectly fits this model of new facts happening and deduced facts being discovered. Not only is that valuable in terms of auditing and debugging, it is also particularly interesting for replication and fault tolerance. Replaying the event log on a second machine will lead to the same state as it did earlier on the first machine, provided the events are applied deterministically.
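The replay property can be sketched as a fold over the log. The account domain and the event names `deposited` and `withdrawn` are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Event:
    """An immutable fact. The kinds used here are hypothetical."""

    kind: str  # "deposited" or "withdrawn"
    account: str
    amount: int


def apply(state: Dict[str, int], event: Event) -> Dict[str, int]:
    """Return the new state after one event, leaving the old state untouched."""
    balance = state.get(event.account, 0)
    if event.kind == "deposited":
        balance += event.amount
    elif event.kind == "withdrawn":
        balance -= event.amount
    else:
        raise ValueError(f"unknown event kind: {event.kind}")
    return {**state, event.account: balance}


def replay(log: Iterable[Event]) -> Dict[str, int]:
    """Rebuild the current state from scratch by applying every event in order."""
    state: Dict[str, int] = {}
    for event in log:
        state = apply(state, event)
    return state


log: List[Event] = [
    Event("deposited", "alice", 100),
    Event("withdrawn", "alice", 30),
    Event("deposited", "bob", 50),
]

first_machine = replay(log)
second_machine = replay(list(log))  # a replica replaying a copy of the log
```

Because `apply` is a pure function of the previous state and the event, every replica that sees the same log in the same order ends up with the same balances.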

That also plays nicely with CQRS, where reads and writes are untangled from each other. The write side just adds events to the event log, whereas the read side might have its own representation of the facts that are deduced from those events, in the form of persisted entities, e.g. in a relational database. Each side may be scaled individually, depending on its load. With these kinds of architecture, you have systems that are both very resilient and very scalable.
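A minimal sketch of that split, with the log held in memory. The names `WriteSide`, `OrdersPerCustomerView` and `catch_up` are hypothetical, and a real read side would persist its view, for example in a relational database.

```python
from typing import Dict, List, Tuple

OrderPlaced = Tuple[str, str]  # (customer, item)


class WriteSide:
    """Handles commands by appending facts to the log; it never answers queries."""

    def __init__(self) -> None:
        self.log: List[OrderPlaced] = []

    def place_order(self, customer: str, item: str) -> None:
        self.log.append((customer, item))


class OrdersPerCustomerView:
    """Read side: a view derived from the log, updated at its own pace."""

    def __init__(self) -> None:
        self.counts: Dict[str, int] = {}
        self._offset = 0  # position in the log processed so far

    def catch_up(self, log: List[OrderPlaced]) -> None:
        for customer, _item in log[self._offset:]:
            self.counts[customer] = self.counts.get(customer, 0) + 1
        self._offset = len(log)


write_side = WriteSide()
view = OrdersPerCustomerView()

write_side.place_order("ann", "book")
write_side.place_order("bob", "lamp")
write_side.place_order("ann", "pen")

stale = dict(view.counts)  # the view has not caught up yet
view.catch_up(write_side.log)
fresh = dict(view.counts)
```

Until `catch_up` runs, the view lags behind the log. That lag is the eventual consistency the architecture accepts in exchange for scaling both sides independently.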

Transactions

Finally, the question might arise: “how do we deal with transactions in this architecture?” The counter-question should be: “do you really need them?” If you cannot coordinate such transactions in a distributed world, one approach could be to use a protocol of guessing, apologising and compensating. Again, this is much like how we act and how things work in real life! As an example, think about airlines: they often guess that a certain number of passengers will not show up for a flight, and sell more tickets than there are seats. If those passengers do show up after all, the airline apologises to those who were overbooked and compensates them by offering an upgrade on the next flight, or something else.
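The airline example can be sketched as follows. The `Flight` class, its `overbooking_allowance` and the first-come-first-served boarding rule are assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Flight:
    seats: int
    overbooking_allowance: int  # the guess: how many no-shows we bet on
    booked: List[str] = field(default_factory=list)
    compensated: List[str] = field(default_factory=list)

    def book(self, passenger: str) -> bool:
        """Guess: accept more bookings than seats, without any coordination."""
        if len(self.booked) >= self.seats + self.overbooking_allowance:
            return False
        self.booked.append(passenger)
        return True

    def board(self, showed_up: List[str]) -> List[str]:
        """Apologise and compensate whenever the guess turns out wrong."""
        present = [p for p in showed_up if p in self.booked]
        boarded = present[: self.seats]
        self.compensated.extend(present[self.seats:])
        return boarded


flight = Flight(seats=2, overbooking_allowance=1)
accepted = [flight.book(name) for name in ("ann", "bob", "cat", "dan")]

# Everyone with a booking shows up, so one passenger has to be compensated.
boarded = flight.board(["ann", "bob", "cat"])
```

No lock or distributed transaction is involved when booking: the system proceeds optimistically and repairs the outcome afterwards if needed.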
